The Data Warehouse Institute Seminar

A couple of years ago I had the good fortune to attend a session with Arkady Maydanchik, one of the world’s leading authorities in Data Warehouse data quality.

Arkady is an accomplished speaker and the session was both informative and easy to follow. What the session did well was to simply describe the aspects of monitoring and improving data quality and how to build the mechanisms to achieve this.

What is Data Warehouse Data Quality?

For the purposes of this blog we are going to focus on monitoring and managing the quality of the data that is being loaded from the source systems in the Data Warehouse via ETL (extraction, transformation and loading) routines.

Some industry players argue that the quality of the data loaded into data warehouses can degrade by up to 2% a month. This can mean that the quality of your fresh new data warehouse can be severely compromised in a short amount of time.

There are a number of reasons why the quality of the data degrades, however one major effect is that small quality issues in the source systems can have major impacts in the data warehouse. Most ETL routines have not been designed to help monitor and manage the quality issues as they occur meaning the data quality issues remain hidden until they become large enough to be noticed. Arkady has designed monitors that measure and record change in quality of the data as it is loaded. The data warehouse administrators will then get a data quality dashboard so they can proactively identify and correct data quality issues as they occur.

Data Quality monitors

The idea is based on Demmings TQM (Total Quality Management) principles where you measure the quality throughout the process, not at the end, and then manage what you measure.

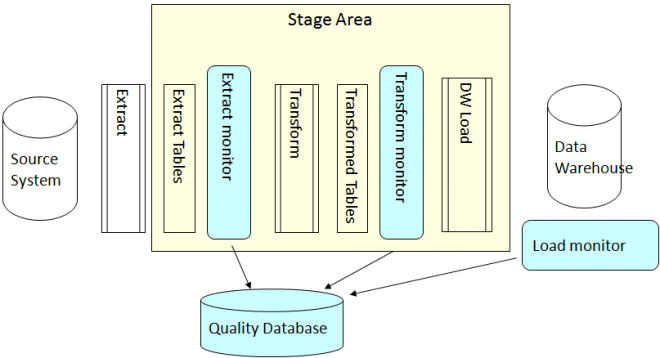

Data Quality monitors work on the principle that at certain points in the ETL load cycle measurements of quality are recorded into a centralised Audit Database. These measurements may be a simple as volumes of rows, dollar values, etc or more complex statistical algorithms depending on what is most appropriate to measure. Over time you can build up a trend of how the quality your data is progressing.

The diagram below indicates where data quality monitors could be implemented in a simple ETL routine.

There are also a number of simple algorithms that can be built into the monitors to enhance the value of the results and help you better understand the data quality.

Based on the output of the monitors you have built you can then determine what needs to be investigated, fixed and at what point in the process this needs to be done.

In most cases the overhead in building these mechanisms is very small as they are developed at the same time as the ETL, yet the payback is great.

At one of the customer sites where I have implemented these ideas the number of issues relating to data loads has been significantly reduced as we can determine very quickly where the issues are and then focus on fixing it, rather than spend time trying to follow data through the process to determine where the issue is.

Implementing Data Quality monitors is one of many ideas that will help you gain the maximum value from your data warehouse investment, while future proofing and reducing the maintenance efforts going forward.